There aren’t many skills today more valuable than learning how to program. Not only do many jobs consist entirely of coding, but a growing share of other positions also require some programming skill to do well.

As you learn to program, you’ll likely encounter a distinction made between ‘high-level’ and ‘low-level’ computer programming languages. Once you know what programming languages are trying to accomplish, the difference becomes much easier to understand.

A programming language is a means of talking to a computer in a way it can understand. Natural languages like English don’t work well because they contain so much subtlety and ambiguity. Instead, we build languages like Python or C that are more formal and rigid. This allows us to break tasks up into teensy little sets of instructions, which computers can execute rapidly.

All code ultimately ends up as ‘machine language,’ the 1’s and 0’s that actually tell a machine where to store things in memory, when to access them, and what operations to perform. How far a language sits from those 1’s and 0’s determines whether it counts as high-level or low-level.
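To make the ‘1’s and 0’s’ a little more concrete, here is a small Python sketch that prints the 8-bit patterns a computer could use to store a couple of small values. It only shows data, not machine instructions (which are also bit patterns), and the helper name to_bits is just an illustrative choice:

```python
def to_bits(value, width=8):
    """Return the binary pattern of a non-negative integer, padded to `width` bits."""
    return format(value, f"0{width}b")

# The number 42 and the character "A" (code point 65) as 8-bit patterns.
print(to_bits(42))        # 00101010
print(to_bits(ord("A")))  # 01000001
```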

High-Level Programming Languages

High-level programming languages are relatively far away from machine language. The lower a language is, the more direct control you have over the computer, so high-level languages tend to give up a certain amount of that control in order to be easier to understand and use.

Ruby, for example, is one of the highest-level languages around. More than one person has noted that they could basically read Ruby code even without any programming experience at all!

This gives you some clue as to the applications for which high-level languages are best suited. They are useful when some hardware performance can be sacrificed to make human programmers more productive. Web development is a good example.
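As a rough illustration of that productivity, here is a tiny snippet in Python, which is widely considered a high-level language like Ruby. The function name order_total and the $50 free-shipping rule are made up for the example:

```python
def order_total(prices):
    # Add up every price; no explicit loop or memory bookkeeping required.
    return sum(prices)

prices = [19.99, 5.00, 42.50]
total = order_total(prices)

if total > 50:
    print("Free shipping!")
```

Even without programming experience, most people can guess what this does, which is exactly the trade high-level languages make: readability and speed of writing over fine-grained control.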

Low-Level Programming Languages

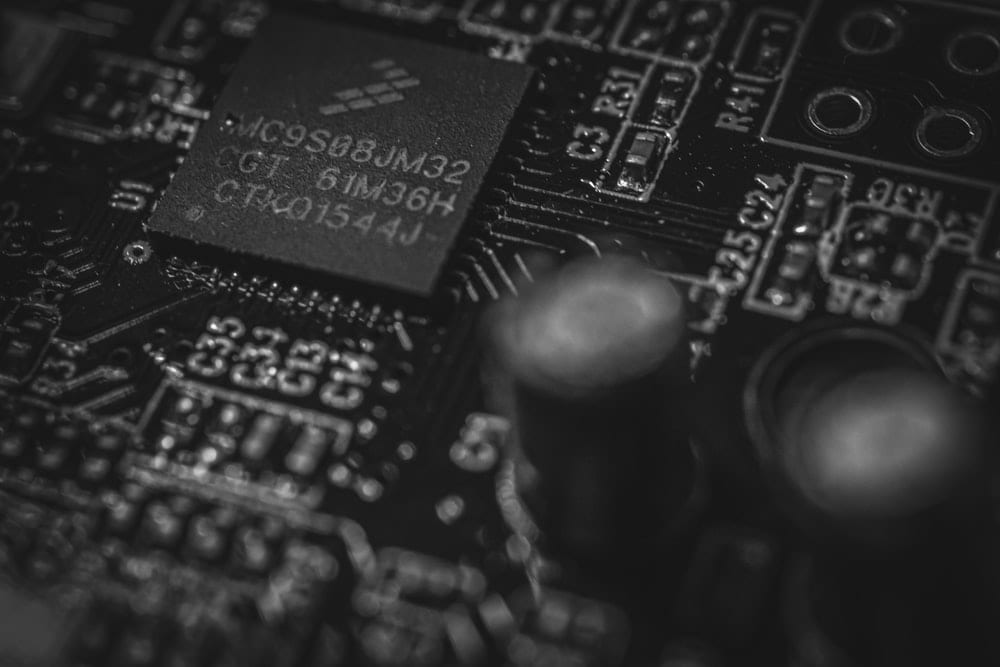

Low-level languages are relatively close to machine language. Writing in a low-level language usually means you have direct control over tasks like memory management. The further down you go, the harder it tends to be for people to read the code and to express a problem in it.
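One way to see what ‘direct control over memory management’ means is to watch a high-level language do that work for you. In this Python sketch (CPython-specific, since sys.getsizeof reports implementation details), the list reallocates its own storage as it grows; in C you would typically request and resize that memory yourself with malloc and realloc, then release it with free. The exact byte counts vary by Python version and platform:

```python
import sys

numbers = []
last_size = sys.getsizeof(numbers)
print(f"empty list: {last_size} bytes")

for n in range(20):
    numbers.append(n)  # no explicit allocation needed
    size = sys.getsizeof(numbers)
    if size != last_size:
        # CPython has reallocated the list's storage behind the scenes.
        print(f"after {len(numbers)} items: {size} bytes")
        last_size = size
```

Each jump in size is a reallocation you never had to write; a low-level language hands that decision, and any mistakes in it, back to you.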

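Machine language, of course, is as low as you can get. But thinking of tasks as strings of 1’s and 0’s is nearly impossible, so programmers who need that level of control use assembly language (often just called Assembly) instead. Assembly replaces raw bit patterns with short, human-readable mnemonics such as MOV or ADD, each of which typically corresponds to a single machine instruction.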
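Python doesn’t expose Assembly directly, but its standard-library dis module offers a comparable peek one layer down: it shows the bytecode instructions CPython compiles a function into before running it. Bytecode targets Python’s own virtual machine rather than the physical processor, so treat this as an analogy for how each layer breaks work into smaller, simpler steps, not as real machine code:

```python
import dis

def add(a, b):
    return a + b

# Print the lower-level instructions CPython generated for add().
# Opcode names differ between versions (e.g. BINARY_ADD in older releases, BINARY_OP in 3.11+).
dis.dis(add)
```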

C is a common example of a low-level language, though some programmers call it ‘mid-level’ because it still sits a step above Assembly. Its syntax is a lot more arcane than Python’s, but it gives you far more control when you need to squeeze performance out of the hardware. Graphics programming and certain kinds of high-performance computing (HPC) are domains where you’re likely to be using a low-level language.

High-Level vs. Low-Level Programming Languages: Which Should I Learn?

As is so often the case, it really depends on what you’re trying to do. As discussed in the previous two sections, high-level and low-level languages are distinguished in part by the kinds of trade-offs they make.

High-level languages are generally easier to learn but give you less control over the computer. Low-level languages tend to be the exact opposite: they are harder to learn but give you more control.

If you’re looking to ratchet a game’s graphics up to 11, you’ll probably need to be ‘hanging’ right over the graphics card, manipulating it with a low-level language. This micromanaging is almost never required to put up a website, so in that domain you’ll almost certainly be using a high-level language.

Both kinds are worth learning but should be prioritized based on your goals and your personal experience. Fortunately, there are plenty of great resources like coding bootcamps that can help you achieve your personal and professional goals.

About us: Career Karma is a platform designed to help job seekers find, research, and connect with job training programs to advance their careers. Learn about the CK publication.